Advanced Assessment Methods and the Intersection of Variables

Thank you for joining me once more for my current blog series on advanced assessment methods for the data gathered by your PA program. One of the main purposes of conducting advanced assessment is to fulfill the requests of the ARC-PA Commission as you complete your SSR. The Commission wants you to show the linkage between your appendix sections. Doing so demonstrates your understanding of the complexity of a PA program and how all the factors interrelate.

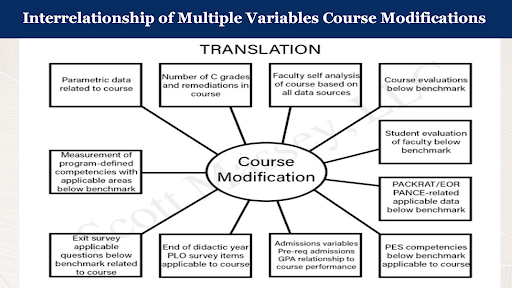

I will give examples here using spider diagrams, a straightforward way of visualizing how many factors relate to one key process. This is similar in theory to the cause-and-effect mapping that I discussed in my previous blog. In that scenario, we attempted to trace a problem back to its root cause. You may use an illustration such as the one below in conjunction with cause-and-effect mapping, for example, to determine which variables to include on a cause-and-effect map. But knowing the interrelationships is also valuable in its own right, as it gives you a 20,000-foot view of the many possible contributing factors in a complex process.

This spider diagram looks at modifying a course through a truly data-driven approach. It lays out the variables you need in order to document both the need for the modification and its target. A decision to modify a course can draw on many different data sets. The key thing is not to modify a course based on intuition alone. There is data available to support decisions.

So, for example, you may consider the number of C grades, along with the faculty and course evaluations. You may look at the course outcomes. If that course teaches certain organ systems, you will want to look at performance on benchmark or landmark exams: are students meeting benchmarks? Are the scores in that area of the course's subject matter lower on subsequent exams? Is there a high number of C grades and a high need for remediation? Even data that often cannot be attributed directly to one course, like the end-of-didactic-year program learning outcomes, exit surveys, or evaluations of faculty, might give you some clues about whether course modification is necessary, and what form it should take.

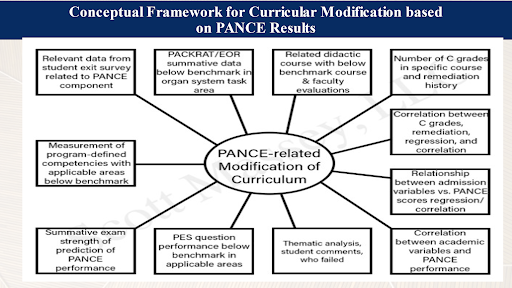

In this example, the question is about PANCE-related modification: If pass rates or scores on the PANCE are not what they should be, is curriculum modification the answer? You examine the related didactic courses with low benchmark results. You look at how C grades and remediation history correlate.

Whenever you are thinking about modifying the curriculum in response to PANCE results, you have to think through these elements before you take those steps. That does not mean you have to dig very deeply into statistics. At a minimum, though, you should take a predictive approach and look at how academic variables relate to PANCE performance. I like to use regression here. If, for a clinical medicine course, the Pearson coefficient is, for example, .56, and the regression you then run shows that about 35% of the variance is explained, then you have a strong predictor course. Remember that the correlation and the regression go hand in hand.
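For readers who want to see the mechanics, here is a minimal sketch of that correlation-plus-regression check in plain Python. The course and PANCE scores are made up purely for illustration, and the function names (`pearson_r`, `simple_regression`) are my own, not part of any particular statistics package. One thing worth knowing: when a regression has only a single predictor, the share of variance explained (R-squared) is simply the Pearson coefficient squared, so a coefficient of .56 on its own corresponds to roughly 31%. A different figure would come from a model that includes additional predictors or from a different data set.

```python
from math import sqrt


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def simple_regression(x, y):
    """Least-squares line y = intercept + slope * x, plus R-squared."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must have the same length (at least 2)")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    # R-squared: 1 minus (unexplained variation / total variation)
    ss_res = sum((b - (intercept + slope * a)) ** 2 for a, b in zip(x, y))
    ss_tot = sum((b - mean_y) ** 2 for b in y)
    r_squared = 1 - ss_res / ss_tot
    return slope, intercept, r_squared


# Hypothetical data: final course percentages and PANCE scores
# for the same eight students (illustrative values only).
course_scores = [78, 85, 92, 70, 88, 95, 81, 74]
pance_scores = [410, 455, 520, 400, 430, 510, 480, 390]

r = pearson_r(course_scores, pance_scores)
slope, intercept, r_squared = simple_regression(course_scores, pance_scores)

print(f"Pearson r: {r:.2f}")
print(f"Variance explained (R-squared): {r_squared:.0%}")
print(f"Each additional course point predicts {slope:.1f} more PANCE points")
```

In practice you would run this with your own cohort data, or use the equivalent functions in Excel, SPSS, or another package your committee already relies on. The point is simply that the coefficient and the variance explained are two views of the same relationship.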

Remember, also, that no one person conducts this examination in isolation. Rather, the committee structure drives the process and provides critical analysis. When a curriculum change is determined to be necessary, there is data to support the reasoning as well as information to present in your SSR.

Now, if that final piece of my explanation just left you in the dark, do not worry! In upcoming blogs, I will go into more detail on how to employ statistics in a meaningful way. Of course, Massey Martin, LLC offers a free four-part webinar in Advanced Assessment Methods, where I cover these subjects in depth. In my next blog, we will discuss the usefulness of stratification and heat maps of your data to find actionable areas or areas of concern in your program.